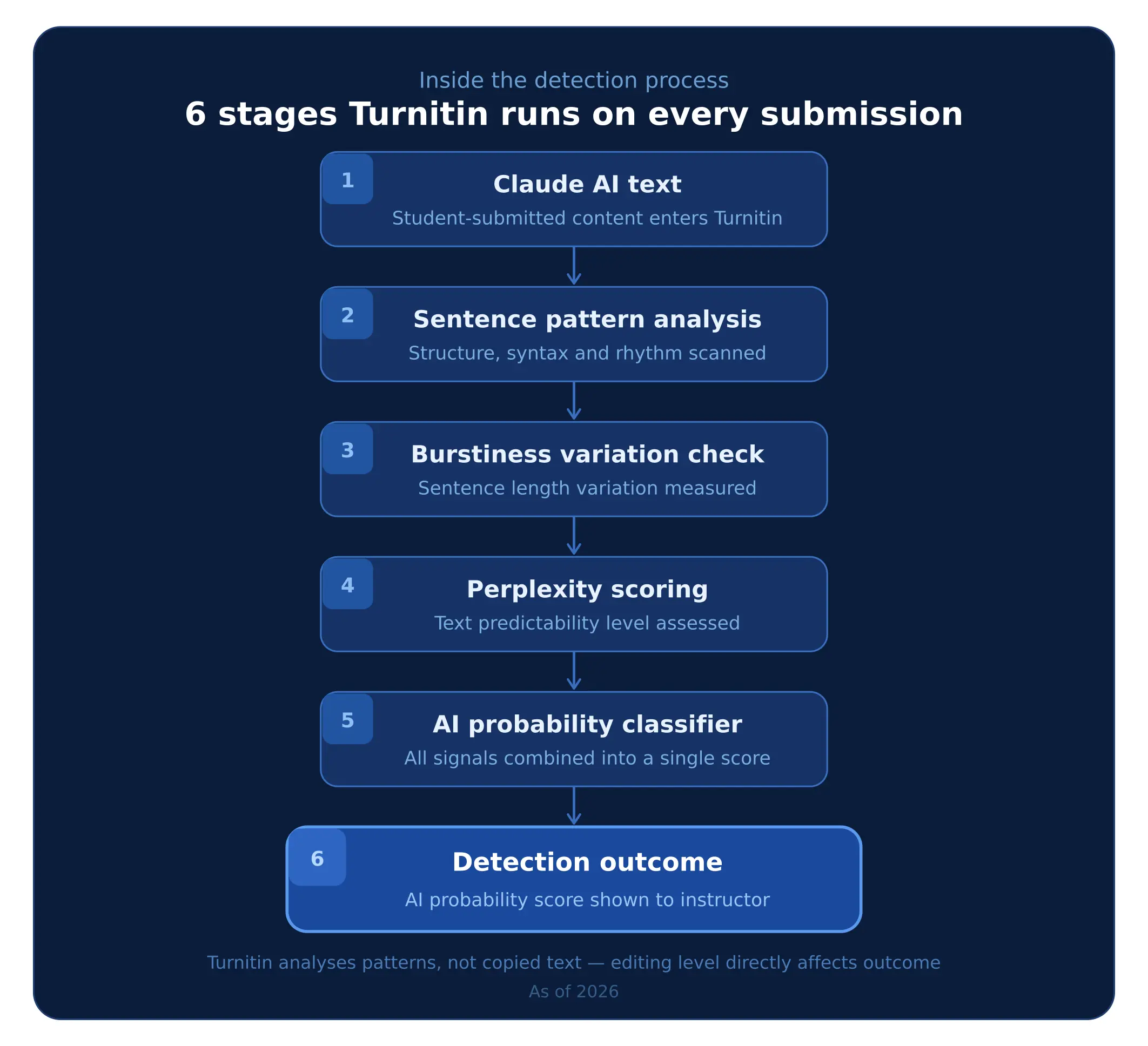

How Turnitin Detects Claude AI Content

Figure: Simplified workflow showing how Turnitin analyses AI-generated writing patterns

The six-step infographic above shows how Turnitin processes writing that may come from Claude AI before reaching a final decision. It helps explain why students often ask whether Claude AI is detectable by pattern-based analysis systems. First, Turnitin studies sentence structure patterns to understand how the content is formed, including how ideas connect.


Next, it evaluates variation in sentence length and writing flow, since human writing usually shifts in pace while AI-generated text tends to remain consistent. Turnitin then applies a predictability check, known as perplexity scoring, which measures how easy the text is to anticipate. If the writing appears overly predictable or smooth, the likelihood of it being flagged as AI-generated increases. Finally, all these signals are combined to produce an overall detection estimate expressed as a percentage rather than a fixed yes-or-no result.
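To make the two measurable signals concrete, here is a minimal Python sketch of sentence-length variation and a perplexity-style predictability score. Turnitin's actual model is proprietary, so everything here is an illustrative assumption: the function names, the naive sentence splitter, and the simple unigram word model are hypothetical stand-ins, not Turnitin's method.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def split_sentences(text: str) -> list[str]:
    """Naive splitter (assumption): break after ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def sentence_length_variation(text: str) -> float:
    """Coefficient of variation of sentence lengths, measured in words.

    Higher values mean the pace shifts more from sentence to sentence.
    """
    lengths = [len(s.split()) for s in split_sentences(text)]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under a unigram model fitted on `reference`.

    Add-one smoothing gives unseen words a non-zero probability.
    Lower perplexity means the text is easier to anticipate.
    """
    ref_counts = Counter(tokenize(reference))
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text has no tokens")
    vocab_size = len(ref_counts) + 1  # one extra bucket for unseen words
    total = sum(ref_counts.values())
    log_prob = sum(
        math.log((ref_counts[t] + 1) / (total + vocab_size)) for t in tokens
    )
    return math.exp(-log_prob / len(tokens))
```

In this toy setup, a passage whose sentences are all the same length scores 0.0 for variation, and a passage built from words the reference model has seen often scores a lower perplexity than one built from unfamiliar words. Real detectors use far larger language models, but the direction of the signals is the same as described above.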

Turnitin’s Two Detection Systems

A key distinction many students misunderstand is that Turnitin does not rely on a single method. It operates through two separate systems.

- Plagiarism Checker → This identifies copied or matched text by comparing submissions with academic sources, published material, and web content.

- AI Detector → This focuses on writing patterns such as structure, predictability, and sentence variation to estimate whether the content may be machine-generated, even if it is original.

This distinction matters because many students confuse plagiarism detection with AI detection. Even a fully original submission can still be flagged if its writing patterns match AI-generated structure. It also clarifies why detection results are not always consistent across different types of writing and academic submissions.
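The difference between the two systems can be illustrated with a small Python sketch of a matching-based check. This is only an assumed, simplified stand-in for plagiarism matching (word trigram overlap); the function names are hypothetical and Turnitin's real matching engine is not public.

```python
import re


def word_ngrams(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Set of lowercase word n-grams found in `text`."""
    words = re.findall(r"[a-z']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(submission: str, source: str, n: int = 3) -> float:
    """Fraction of the submission's n-grams that also appear in the source."""
    sub = word_ngrams(submission, n)
    if not sub:
        return 0.0
    return len(sub & word_ngrams(source, n)) / len(sub)
```

A copied passage scores close to 1.0 against its source, while freshly worded text scores 0.0 even if a language model produced it. That is why a matching-based check cannot stand in for pattern-based AI detection, and why an original submission can pass one system and still be flagged by the other.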

Detection Accuracy: Can Turnitin Reliably Identify Claude AI?

Turnitin can identify writing patterns linked to Claude AI, but its detection accuracy is not consistent in every situation, because it relies on probability signals whose results change depending on whether text is submitted directly, edited by a student, or combined with human writing. This section compares detection likelihood across common submission settings, explains how Claude performs against other AI tools, and shows whether editing reduces detection risk.

When Can Turnitin Detect Claude AI Content?

Detection likelihood changes based on how Claude-assisted writing is prepared before submission, as shown below.

| Situation | Detection Likelihood |
| --- | --- |
| Raw Claude output submitted directly | High |
| Light editing applied | Moderate |
| Heavy rewriting by student | Low–Moderate |
| Mixed human + AI writing | Inconsistent |
| Outline or structure assistance only | Low |

These differences happen because Turnitin analyses writing behaviour, not just originality. When students submit text that closely follows AI sentence rhythm and structure, detection signals become stronger. As more personal rewriting is added, those signals become weaker and less predictable, which explains why accuracy can change from one submission to another.

Claude vs Other AI Tools: Detectability Comparison

Turnitin detects Claude AI and ChatGPT differently because models produce distinct writing-pattern signals across student submissions.

| AI Tool | Detection Strength by Turnitin |
| --- | --- |
| ChatGPT | Very High |
| Claude | Moderate–High |
| Grok | Moderate |
| Perplexity | Moderate |

In practice, Turnitin tends to identify direct-output essays from ChatGPT more consistently than those generated with Claude AI, especially when students submit responses with minimal rewriting. Outputs from Grok and Perplexity AI are often shorter, mixed with retrieved information, or used for planning support, which makes detection behaviour less consistent across full assignments. This difference becomes clearer in ChatGPT vs Claude detection comparisons and is explained further in “Can Turnitin Detect ChatGPT”, which shows why results can vary across AI-assisted submissions.

Can Turnitin Detect Edited Claude AI Content?

Editing depth affects how consistently detection signals appear in submitted assignments.

Risk Summary: Detection Likelihood Based on Editing Level

| Risk Level | Scenario |
| --- | --- |
| High Risk | Submitting full Claude-generated essay without edits |
| Moderate Risk | Lightly edited Claude output |
| Low Risk | Using Claude for outline or structure planning only |

Detection results usually become less consistent as students reorganise ideas, which also explains why students ask whether Turnitin detects AI paraphrasing after they edit generated drafts. Variation across longer assignments can further reduce signal consistency, so reports should be interpreted as indicators rather than final conclusions about authorship.

When Turnitin May Not Detect Claude AI Content

Turnitin does not identify Claude-assisted writing consistently in every submission. Detection becomes less reliable when the final assignment reflects clear student reasoning, subject knowledge, and structural rewriting rather than directly generated text. Detection probability often changes after structured refinement, similar to careful academic revision through an AI content editing service, where the submitted draft no longer follows typical AI response patterns.

Turnitin Detection Is Less Reliable When:

- the assignment is very short (under 300 words)

- writing has been heavily rewritten by the student

- Claude was used only for outline or planning support

- human and AI writing appear in different sections

- technical or discipline-specific explanation dominates the response

In these situations, detection signals become less consistent across the document, so results should be interpreted cautiously rather than treated as fixed outcomes.

AI detectors sometimes flag human writing incorrectly because predictable academic sentence structures can resemble machine-generated text patterns. Detection reports should therefore be read as probability indicators, not proof that AI writing was used. Understanding this helps students interpret feedback more confidently and avoid unnecessary concern when reviewing flagged passages.
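A short worked calculation shows why a flag is a probability indicator rather than proof. The Python sketch below applies Bayes' rule; the function name and all three input rates are hypothetical numbers chosen for illustration, not figures published by Turnitin.

```python
def probability_ai_given_flag(
    prior_ai: float,
    true_positive_rate: float,
    false_positive_rate: float,
) -> float:
    """Bayes' rule: chance that a flagged submission really used AI.

    prior_ai            -- assumed share of submissions that used AI
    true_positive_rate  -- assumed share of AI submissions that get flagged
    false_positive_rate -- assumed share of human submissions that get flagged
    """
    flagged_ai = prior_ai * true_positive_rate
    flagged_human = (1 - prior_ai) * false_positive_rate
    return flagged_ai / (flagged_ai + flagged_human)


# Hypothetical inputs: 10% of submissions use AI, 90% of those are flagged,
# and 5% of human-written submissions are flagged by mistake.
posterior = probability_ai_given_flag(0.10, 0.90, 0.05)
print(f"{posterior:.0%}")  # prints 67%
```

Under these assumed rates, roughly one in three flagged submissions would be human-written, which is why reports need to be read alongside other evidence.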

Claude Version Differences and Their Impact on Detection

Answers to “can Turnitin detect Claude AI” may also differ depending on which Claude version supported the assignment draft. Newer versions typically produce more natural academic structure, which can change how detection signals appear across assignments.

| Claude Version | Detection Difficulty | Key Reason |
| --- | --- | --- |
| Claude 2 | Easier to detect | More predictable sentence structure and repeated phrasing patterns |
| Claude 3 | Harder to detect | Stronger paragraph flow and improved transition variation |
| Claude 3.5+ | Hardest to detect (current versions) | Most natural academic tone and highest structure flexibility |

Recent Claude versions generate writing that varies sentence length more naturally and maintains smoother argument flow across sections. This reduces the consistency of repeated structural patterns that detection systems rely on when analysing extended responses.

As a result, assignments supported by newer Claude versions may produce less predictable detection outcomes than those created with earlier releases. This explains why detection reports sometimes differ even when students use the same tool for similar coursework tasks.

Lecturer Review vs Turnitin AI Detection: Which Is More Reliable?

Universities do not rely on detection software alone when reviewing possible AI-assisted writing.

| Detection Method | How It Works | Reliability |
| --- | --- | --- |
| Turnitin AI Detector | Analyses sentence structure and statistical writing signals | Moderate: may produce false positives |
| Lecturer Judgement | Evaluates writing style consistency across submissions | High: especially when prior work exists |
| Combined Review | Software signals checked with submission history | Strongest: standard practice in UK universities |

Lecturers often recognise a student’s writing style from earlier coursework. Sudden vocabulary changes, unusual argument depth, or shifts in structure across assignments can raise concerns even when software indicators remain moderate. Because lecturers evaluate the assignment within its academic context, their judgement plays a central role in authorship decisions.

For this reason, a detection flag alone is rarely treated as final evidence. Instead, results are interpreted alongside UK academic integrity guidance before any action is considered. When software indicators and lecturer evaluation point in the same direction, institutions treat the conclusion as more reliable and consistent with normal academic-integrity review procedures.

Safe Ways Students Can Use Claude AI Without Academic Risk

Universities allow AI tools for support tasks, but the submitted assignment must still show your own reasoning and subject understanding.

Acceptable Uses (Low Academic Risk)

- generating topic directions before choosing your own argument

- building a rough essay structure that you later develop yourself

- improving grammar in drafts you have already written

- checking referencing format after selecting sources independently

- simplifying difficult readings before writing your own explanation

Structured proofreading support such as Assignment Editing Services can also help improve clarity and academic expression without changing authorship ownership, which keeps the submission aligned with normal university expectations.

Risky Uses (High Academic Risk)

- submitting Claude-generated paragraphs as part of your assignment

- rewriting journal articles through AI instead of interpreting them yourself

- paraphrasing full coursework using automated tools

- asking AI to “humanise” or disguise generated academic writing

Most universities are not checking whether AI was opened during planning. They are checking whether the final submission reflects your thinking, your structure, and your interpretation of sources. When those elements are missing, the work may be treated as academically unreliable regardless of how it was produced.

Final Thought

Turnitin can detect writing supported by Claude AI, but results are not always exact and usually depend on how the assignment was edited before submission. Most universities do not rely on software alone. Instead, they review detection signals together with lecturer judgement and academic integrity expectations to decide whether the work reflects a student’s own understanding. As AI tools continue to improve, detection accuracy will keep changing, so students should focus on using these tools for planning and support rather than authorship. For clearer guidance on safe academic use, you can also explore our overview of AI writing tools and university policies.

If you find it hard to ensure AI-free submissions, you can consider our Native Assignment Help UK experts. They will assist with your assignment-related challenges and provide a proper Turnitin report as evidence of originality.